Introduction

The industry has been talking a great deal about low-latency video delivery: why it matters, and which new protocols can reduce latency further, either to bring it on par with broadcast or to push it even lower.

As traditional TV service providers look to migrate away from IPTV or QAM towards all-ABR delivery, and as OTT providers look to offer linear and live TV services, a new set of requirements arises.

The goal, then, is to merge the quality of experience and reliability of IPTV/QAM with the convenience and flexibility of delivering the TV service to retail devices.

Zapping in the IPTV/QAM days

One area in which vendors and operators invested heavily when migrating from analog to digital was reducing zap time.

Zap time is the total time from the moment the viewer changes the channel using a remote control to the moment the picture of the new channel is displayed.

Delays when changing channels can be caused by several different factors: network factors, transport protocol factors, and buffering and decoder factors. Together, these typically result in channel zaps of one to two seconds. Zap times this high generally hurt the quality of experience for users.

Hence, we have witnessed the advent of the so-called Fast Channel Change (FCC) solutions.

There are broadly two FCC approaches.

The server-based FCC solution leverages the RTP/RTCP protocol and is specifically used in IPTV deployments (IP multicast based delivery). When zapping to a new channel, after leaving the previous channel, the device requests a unicast stream from an FCC server. This server continuously receives the IP multicast streams of all the TV channels and caches them for a limited time, so it always has access to a recent I-frame for every channel. The data in the unicast stream can be sent faster than the nominal streaming rate of the video, which quickly fills the video buffer and lets the device catch up with the multicast, which it joins when instructed to do so by the FCC server.

To the end-user, this process results in a fast (sub-second) and flawless channel change experience.

A Fast Channel Change solution can also be created for broadcast (non-IPTV) and cable deployments. It achieves similarly low zap times, but with a transition period of a few seconds during which the video is first displayed at a lower quality. In this approach, upon channel change, the device joins not only the normal channel stream but also a companion stream: a broadcast stream containing the same channel, encoded with a much shorter Group of Pictures (GOP) size and generally at lower quality.

Because of the lower quality encoding, it can be streamed at a lower bit rate compared to the normal quality channel.

Both FCC approaches require extra bandwidth compared to a non-FCC deployment, but only for a few seconds. The companion stream solution scales better, whereas the server-based FCC solution delivers the best Quality of Experience (fast channel change without the transition period).

Zapping in ABR times

With the adoption of Adaptive Bitrate (ABR) streaming for linear TV services, we are back at the drawing board to find elegant ways to optimize zap time.

Indeed, with default DASH or HLS implementations we are looking at worst-case zap times equivalent to half of the segment duration. With segment durations ranging between 2 and 10 seconds, that does not match the sub-second channel change experience customers have grown accustomed to with IPTV/cable TV FCC.

Broadpeak, in collaboration with castLabs, has created a scalable Fast Channel Change solution for MPEG-DASH that brings the experience back on par with IPTV/cable TV FCC.

This implementation relies on an optimized packager provided by Broadpeak and an enhanced castLabs player operating in MPEG-DASH.

Fast Zap for DASH

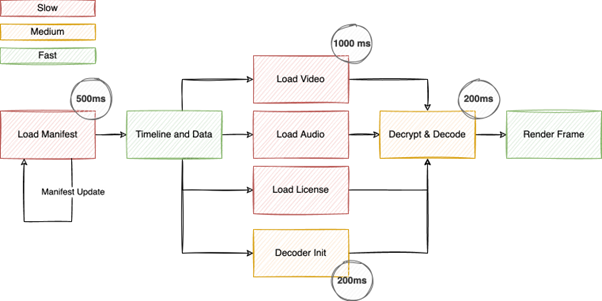

With DASH as the underlying streaming protocol for ABR based video delivery, we are faced with a few challenges to permit the player to tune into a live stream quickly. The typical workflow consists of the following primary steps:

- Load the Manifest (and update it on a regular basis)

- Load video and audio data

- Load DRM licenses and decryption key

- Initialize decoders

- Start the rendering loop to reach the target frame

Some of the steps can execute in parallel, while others need to be executed sequentially. The Manifest, for instance, needs to be loaded and parsed before video and audio segments can be loaded. The network requests for media segments as well as any license requests can be handled in parallel though. At the same time, the decoder initialisation is a prerequisite that needs to be completed before the rendering loop can start.
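The ordering constraints above can be sketched with `asyncio`. This is only an illustration: every function name is hypothetical, the `sleep` calls stand in for real network and decoder work, and running decoder initialisation after the loads is an assumption made for simplicity rather than a description of the castLabs player.

```python
import asyncio

# All coroutines below are hypothetical placeholders, not a real player API.

async def load_manifest(channel):
    await asyncio.sleep(0.01)  # stands in for an HTTP request plus parsing
    return {"channel": channel, "first_segment": channel + "/seg-1.m4s"}

async def load_media(manifest):
    await asyncio.sleep(0.02)  # stands in for segment downloads
    return b"media:" + manifest["first_segment"].encode()

async def load_license(manifest):
    await asyncio.sleep(0.02)  # stands in for a DRM license request
    return "license-for-" + manifest["channel"]

async def init_decoders(media):
    await asyncio.sleep(0.01)  # stands in for decoder setup
    return "decoder-ready"

async def tune_in(channel):
    events = []
    # Sequential: nothing else can start before the manifest is parsed.
    manifest = await load_manifest(channel)
    events.append("manifest")
    # Parallel: media and license requests do not depend on each other.
    media, _license = await asyncio.gather(
        load_media(manifest), load_license(manifest)
    )
    events.append("media+license")
    # Sequential (assumed here): decoders must be ready before rendering.
    await init_decoders(media)
    events.append("decoders")
    events.append("render")
    return events

if __name__ == "__main__":
    print(asyncio.run(tune_in("news")))
```

Because the two requests inside `gather` overlap, the total wait for that stage is the slower of the two rather than their sum.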

We identified multiple optimisations that can be applied to decrease zapping times. These improvements are generally independent, and each contributes to a shorter channel tune-in.

Optimisations start with pre-loading and updating manifests for more than one live channel. This gives the client ready-to-use information about more than just the currently playing channel. At the same time, manifests can be structured to avoid the need for frequent updates altogether. Combining the two lets the player observe more than one live channel without increasing load on the Origin server.

With Manifest data pre-loaded, the client also gains the ability to pre-fetch and store DRM licenses for the content. A feature-rich multi-DRM backend such as castLabs DRMtoday can serve grouped, persistable licenses, which allows the player to avoid license loads during the tune-in process.
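As a rough illustration of manifest pre-loading, the sketch below keeps one cached manifest per channel and only refetches an entry once it is older than a chosen age. The `ManifestCache` class, the `fetch` callback, and the 30-second default are all hypothetical and are not taken from the Broadpeak or castLabs implementation.

```python
import time

class ManifestCache:
    """Hypothetical per-channel manifest cache with a maximum age."""

    def __init__(self, fetch, max_age_s=30.0, clock=time.monotonic):
        self._fetch = fetch          # callable: channel -> parsed manifest
        self._max_age_s = max_age_s  # assumed refresh interval
        self._clock = clock          # injectable so the cache can be tested
        self._entries = {}           # channel -> (fetched_at, manifest)

    def preload(self, channels):
        # Warm the cache for channels the viewer may zap to next.
        for channel in channels:
            self.get(channel)

    def get(self, channel):
        now = self._clock()
        entry = self._entries.get(channel)
        if entry is None or now - entry[0] > self._max_age_s:
            # Missing or stale: fetch once and remember when we did.
            entry = (now, self._fetch(channel))
            self._entries[channel] = entry
        return entry[1]
```

A longer `max_age_s` is only reasonable when the manifest is structured so that it rarely changes, which is what keeps the extra channels from adding load on the origin.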

At the same time, the player spends most of its time waiting for video data to arrive. This is of course limited by the available bandwidth on both the client and the CDN. Depending on how media segments are packaged, another limiting factor is that decoding, at least for video, needs to start at specific points in the media stream, namely at an I-frame.

Although the MPEG-DASH standard has evolved and introduced the concept of re-sync points to find I-frames, each media segment already has a prominent position that must be an I-frame: the first frame of the segment.

By leveraging low-latency streaming mechanisms such as CMAF Chunked Transfer Encoding, not to decrease the delay to the live edge, but to decrease the time it takes to load decodable media data, the zapping time can be reduced.

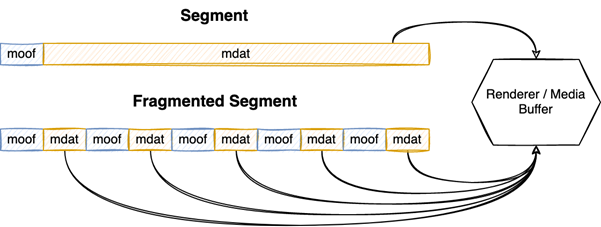

Additionally, by using fragmented/chunked segments that contain multiple moof/mdat boxes, the time it takes for the player to start the decoding process can be significantly reduced, accelerating the zapping even further.
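To make the moof/mdat idea concrete, here is a minimal sketch that scans the top-level ISO BMFF boxes of a partially downloaded segment and reports where the first complete moof+mdat pair ends. It relies only on the standard box header layout (a 32-bit big-endian size followed by a four-character type, with a 64-bit size when the 32-bit field is 1). The function names are illustrative, and a real player would do considerably more validation.

```python
import struct

def iter_top_level_boxes(data):
    """Yield (type, offset, size) for each complete top-level box in data."""
    offset = 0
    while offset + 8 <= len(data):
        size, box_type = struct.unpack_from(">I4s", data, offset)
        header = 8
        if size == 1:
            # A size of 1 means a 64-bit "largesize" follows the type field.
            if offset + 16 > len(data):
                break
            (size,) = struct.unpack_from(">Q", data, offset + 8)
            header = 16
        if size < header or offset + size > len(data):
            # Box not fully downloaded yet (or size 0 / malformed): stop here.
            break
        yield box_type.decode("latin-1"), offset, size
        offset += size

def first_decodable_chunk_end(data):
    """Return the byte offset just past the first moof+mdat pair, or None."""
    saw_moof = False
    for box_type, offset, size in iter_top_level_boxes(data):
        if box_type == "moof":
            saw_moof = True
        elif box_type == "mdat" and saw_moof:
            return offset + size
    return None

def make_box(box_type, payload):
    """Build a minimal box for demonstration purposes."""
    return struct.pack(">I4s", 8 + len(payload), box_type) + payload
```

Calling `first_decodable_chunk_end` on the bytes received so far tells the player how soon it can hand data to the decoder instead of waiting for the whole segment.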

All this can be achieved while remaining fully MPEG-DASH compliant. By the same token, the stream remains backwards compatible with player implementations that are not optimized for fast zap.

For example, assume a 1080p video stream encoded at 12 Mbit/s with 4-second segments of 20 chunks each. Loading a full segment with 25 Mbit/s of available internet bandwidth will take about 2 seconds. If the target position is located at the end of the segment, the player will need additional time to decode from the first I-frame to the target frame. As an example, with Firefox 103 on a 2019 MacBook Pro, this added another 500 ms to decode the data. This gives a zapping time of around 2.5 seconds.

In contrast, with our Fast Zap implementation using 20 chunks per segment, and starting at the segment boundary, the load time to get enough data to start playback is reduced to 120 milliseconds, which is the time it takes to load the first chunk. At the same time, the decode time drops to 60 milliseconds because the decoder only has to process the initial I-Frame and start presenting the decoded frames immediately after that. This creates a zapping experience where the channel change will happen in less than 200 milliseconds.
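The arithmetic behind these figures can be checked with a few lines of Python. The bitrate, segment duration, chunk count, bandwidth, and decode times come from the example above; the helper names are illustrative. The equal-size chunk estimate is a simplifying assumption, and it comes out below the 120 ms quoted above, presumably because the first chunk carries the I-frame and is larger than average.

```python
def load_time_s(bitrate_mbps, duration_s, bandwidth_mbps):
    """Seconds needed to download `duration_s` of media at `bitrate_mbps`."""
    return bitrate_mbps * duration_s / bandwidth_mbps

def zap_time_s(load_s, decode_s):
    """Zap time modelled as load time plus decode time only."""
    return load_s + decode_s

BITRATE_MBPS = 12.0
SEGMENT_S = 4.0
CHUNKS_PER_SEGMENT = 20
BANDWIDTH_MBPS = 25.0

# Conventional case: load the whole segment, then decode up to the target.
segment_load = load_time_s(BITRATE_MBPS, SEGMENT_S, BANDWIDTH_MBPS)
conventional = zap_time_s(segment_load, 0.5)  # 500 ms decode from the text

# Chunked case, assuming all chunks are the same size (a simplification).
chunk_s = SEGMENT_S / CHUNKS_PER_SEGMENT
avg_chunk_load = load_time_s(BITRATE_MBPS, chunk_s, BANDWIDTH_MBPS)

# Figures reported in the text for the first chunk and the I-frame decode.
fast_zap = zap_time_s(0.120, 0.060)

if __name__ == "__main__":
    print(f"segment load:   {segment_load:.2f} s")
    print(f"conventional:   {conventional:.2f} s")
    print(f"avg chunk load: {avg_chunk_load * 1000:.0f} ms")
    print(f"fast zap:       {fast_zap * 1000:.0f} ms")
```

The segment load works out to 48 Mbit / 25 Mbit/s = 1.92 s, which the text rounds to 2 seconds, and the fast zap total of 180 ms is consistent with the "less than 200 milliseconds" claim.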

This can further be combined with other client-side optimizations, for instance cached initialization segments or decoder instance reuse, that are implemented in the castLabs PRESTOplay player suite to create very fast zapping experiences.

Conclusion

One of the last obstacles to migrating all video services to ABR-based streaming was enabling a smooth channel change experience. The gold standard was what had historically been implemented for IPTV and QAM-based TV services, which enabled sub-second channel changes. With the innovation by castLabs, in collaboration with Broadpeak, TV service providers and OTT service operators are now finally able to match that experience and migrate to an all-ABR delivery system.

If you want to experience fast zapping first-hand, visit our joint fast channel zapping demo page.

During IBC 2022 you will find Broadpeak in Hall 1 booth #1B79 and castLabs in Hall 5 booth #5C55, ready to discuss how we can enable fast channel zapping for your customers.